OpenAI Adds New ChatGPT Safety Features Amid Lawsuits: Can They Prevent Overdose and Violence?

2026-05-15

ChatGPT is back in the spotlight after OpenAI introduced updated safety features designed to detect signs of self-harm risk and potential violence in conversations. The update comes as the company faces lawsuits and investigations over chatbot interactions that allegedly put users at risk.

The key question is simple: can the new safety features prevent overdose and violence? They can help detect risks earlier, but they are not a guarantee of prevention.

Key Takeaways

- OpenAI has added safety features to ChatGPT that identify signs of self-harm and potential danger to others based on conversation context.

- Temporary safety summaries are used to read risk patterns in sensitive conversations and are not presented as permanent memory.

- The new features can serve as an added layer of protection, but they do not replace professional help, emergency services, or human supervision.


OpenAI ChatGPT and New Safety Features: What Has Changed?

ChatGPT is said to have gained safety capabilities that are more sensitive to conversation context. The focus is on high-risk situations: signs of self-harm, conversations related to suicide or overdose, and potential violence.

The main change lies in how the system reads risks that develop over time. Previously, AI systems were often judged by their response to a single message. In the latest update, the context of sensitive conversations is also taken into account.

Temporary Safety Summary in OpenAI ChatGPT

A temporary safety summary is a short record of the safety context in a sensitive conversation. It is not intended as permanent memory or general personalization.

The purpose of this feature is to help ChatGPT identify warning signs that may not be clear from a single message. For example, one sentence may seem ordinary, but it can become more serious if it appears after patterns of distress, harmful plans, or intent to self-harm.
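The difference between judging one message and judging a conversation can be sketched in a few lines of Python. This is a toy illustration only, not OpenAI's implementation: the signal labels, the weights, the threshold, and the `SafetySummary` class are all hypothetical, and the sketch assumes some upstream classifier has already tagged each message with abstract signal labels.

```python
from dataclasses import dataclass, field

# Hypothetical signal weights and cutoff, chosen only for illustration.
SIGNAL_WEIGHTS = {"distress": 1, "plan": 2, "intent": 3}
ESCALATION_THRESHOLD = 4


@dataclass
class SafetySummary:
    """Toy conversation-scoped summary, meant to be discarded afterwards."""
    signals: list = field(default_factory=list)

    def update(self, message_signals):
        # Store only abstract signal labels, never the message text.
        self.signals.extend(message_signals)

    def score(self):
        return sum(SIGNAL_WEIGHTS.get(s, 0) for s in self.signals)


def single_message_score(message_signals):
    """Score one message in isolation, ignoring earlier context."""
    return sum(SIGNAL_WEIGHTS.get(s, 0) for s in message_signals)


# Three messages, each already tagged by an assumed upstream classifier.
# The last message carries no signal on its own.
conversation = [["distress"], ["distress", "plan"], []]

summary = SafetySummary()
for signals in conversation:
    summary.update(signals)

last_message_alone = single_message_score(conversation[-1])
with_context = summary.score()

print(last_message_alone >= ESCALATION_THRESHOLD)  # False
print(with_context >= ESCALATION_THRESHOLD)        # True
```

The final message looks ordinary when scored alone, but the accumulated context crosses the assumed threshold. A production system would be far more nuanced than a weighted sum.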

OpenAI ChatGPT Amid Lawsuits: Why Is the Safety Update in the Spotlight?

The safety feature update has drawn attention because it comes amid legal pressure on OpenAI. Several lawsuits and investigations highlight allegations that ChatGPT was not safe enough when handling dangerous conversations.

Widely discussed cases include a California lawsuit related to a death from an accidental overdose and a federal lawsuit linked to a mass shooting at Florida State University.

The claims in the lawsuits still need to go through the legal process, so readers need to distinguish between allegations, defenses, and proven facts.

Lawsuits, Investigations, and the Limits of Public Information

Public information clearly states that OpenAI is the developer of ChatGPT and that the platform uses generative AI models to respond to user conversations. Information about safety features, model policies, and Trusted Contact is also available from official announcements.

However, there is not enough information to confirm that the new features can prevent all cases of overdose, self-harm, or violence. Such claims need to be checked carefully because AI systems still have detection limits, context limits, and the risk of misreading situations.


Can OpenAI ChatGPT Prevent Overdose?

ChatGPT can help reduce risk by refusing harmful instructions, giving more careful responses, and directing users to safer help. However, these safeguards cannot guarantee overdose prevention.

Overdose often involves medical conditions, certain substances, dosage, response time, and emergency factors that require human assistance. AI should not be positioned as a replacement for doctors, counselors, family, or emergency services.

How Do Safety Features Read Signs of Self-Harm?

Safety features can look for signs such as severe distress, intent to self-harm, requests for dangerous methods, or conversation patterns that increasingly point to serious risk. In certain situations, the system can provide de-escalation responses and encourage users to seek help.

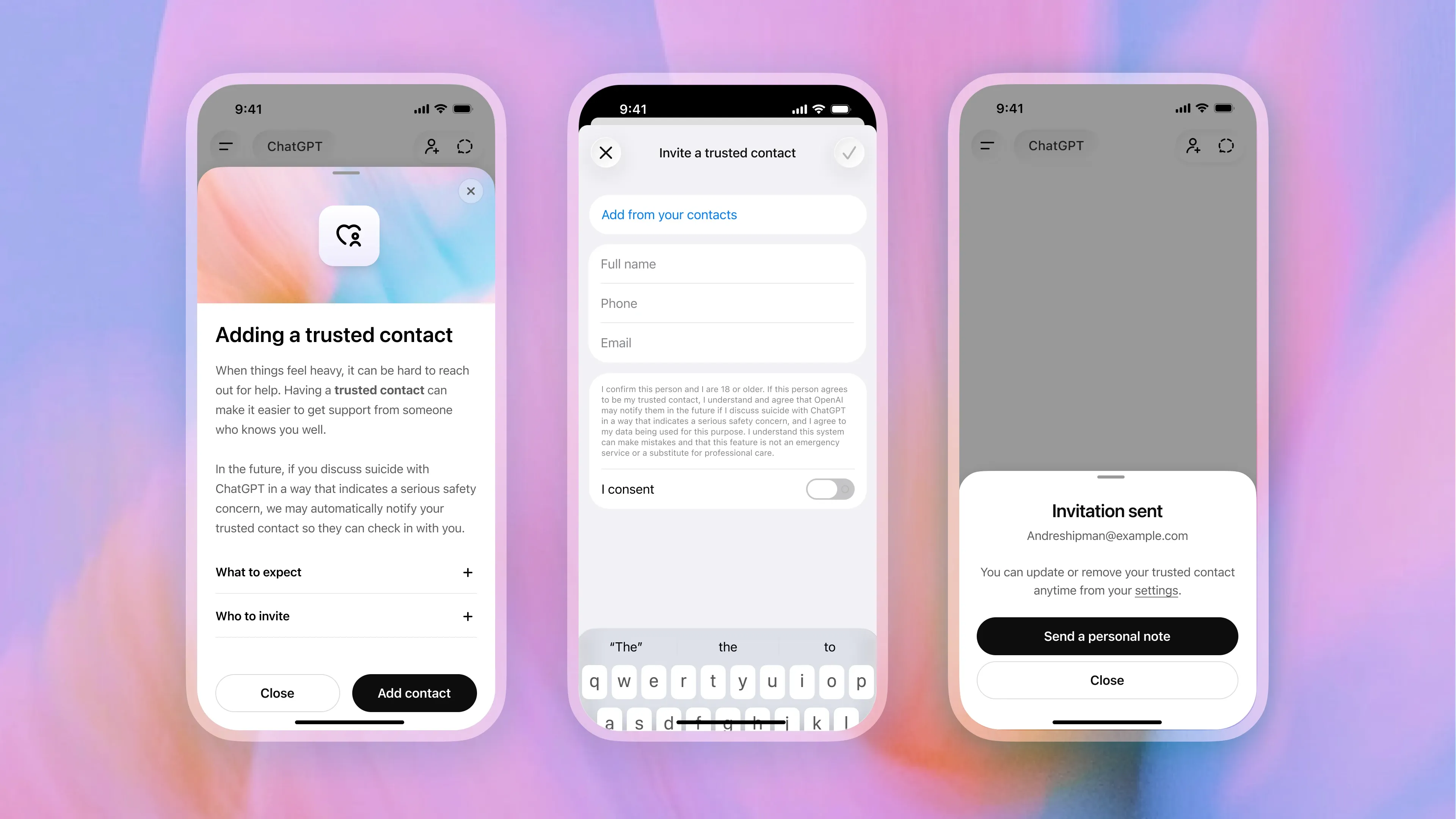

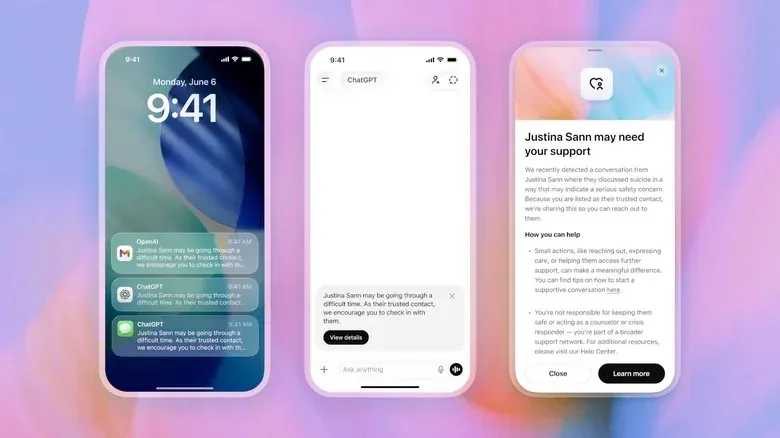

If the Trusted Contact feature is active, adult users can choose one trusted contact to receive a notification when the system and reviewers assess a serious safety risk. The contact is not meant to receive the full conversation content, only a notification asking them to check on the user promptly.
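The conditions described above can be expressed as a small gate. The following Python sketch is an assumption-laden illustration, not the actual Trusted Contact logic: the function name `build_notification`, its parameters, and the notification wording are all hypothetical. It only shows the two ideas stated in the text: an alert requires both a system flag and reviewer agreement, and the transcript is never copied into the alert.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Notification:
    contact: str
    message: str


def build_notification(
    is_adult: bool,
    trusted_contact: Optional[str],
    system_flagged: bool,
    reviewer_confirmed: bool,
    transcript: str,
) -> Optional[Notification]:
    """Return an alert for the trusted contact, or None if no alert applies."""
    # The feature is optional and described as available to adult users.
    if not is_adult or not trusted_contact:
        return None
    # Both the automated system and reviewers must assess a serious risk.
    if not (system_flagged and reviewer_confirmed):
        return None
    # The transcript is deliberately not included in the notification.
    return Notification(
        contact=trusted_contact,
        message="Please check in with the person who listed you as a trusted contact.",
    )


alert = build_notification(True, "contact@example.com", True, True, "private text")
no_alert = build_notification(True, "contact@example.com", True, False, "private text")
print(alert is not None, no_alert is None)
```

Requiring both conditions trades some speed for fewer false alarms, which is one plausible reading of why reviewers are part of the described flow.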

Can OpenAI ChatGPT Prevent Violence?

ChatGPT can help withhold responses that could facilitate violence and recognize signs of intent to harm others. However, preventing real-world violence cannot depend on AI alone.

Violence is influenced by many factors, including access to dangerous tools, psychological conditions, social environments, and responses from authorities. AI safety is only one layer of protection in a broader ecosystem.

Mass Shooting and Harm-to-Others Risk

In the context of mass shootings, the safety update is intended to make the system more sensitive to conversations that point to harm to others, meaning the potential for a user to endanger other people.

Features such as temporary safety summaries can help the system detect increasingly dangerous conversation patterns. However, their effectiveness still needs to be assessed through audits, independent evaluations, policy transparency, and regulatory oversight.


Trusted Contact, Privacy, and the Limits of User Safety

Trusted Contact gives adult users the option to add a trusted person as a contact who can be reached in cases of serious self-harm risk. The feature is optional and designed as a human connection channel when users may be in a crisis situation.

From a privacy perspective, the feature deserves careful attention. Users need to understand who will be contacted, when notifications can be sent, what data is used, and whether the feature is available in their region.

Does ChatGPT Share Conversation Content?

Based on public explanations, trusted contacts do not receive full conversation transcripts. The notification functions as an alert so the contact can check on the user’s safety.

Even so, users are still advised to read the privacy settings directly. Policies, regional availability, and how the feature works may change as the product is updated.

The Impact of AI Safety on Users, Traders, and Crypto Asset Investors

Readers from the crypto asset community often use AI for research, trading plans, news analysis, and education. For this reason, AI safety matters not only for mental health issues but also for understanding the reliability limits of digital platforms.

When using AI for financial decisions, users still need to verify data from official sources, exchanges, project documents, and regulators. Chatbots can help summarize information, but they do not replace independent research or professional advice.

How to Use AI Safely for Market Research?

Use AI as a supporting tool, not as a single source. Ask for simple explanations, compare data, then double-check important figures before trading.
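As a minimal sketch of the double-checking step, the Python snippet below compares a figure quoted by a chatbot with the same figure taken from an official source and flags large gaps. The helper name `figure_matches`, the 1% tolerance, and the sample numbers are all assumptions made for illustration, not real market data.

```python
import math


def figure_matches(ai_value: float, official_value: float, rel_tol: float = 0.01) -> bool:
    """Return True when the AI-quoted figure is within rel_tol of the official one."""
    return math.isclose(ai_value, official_value, rel_tol=rel_tol)


# Made-up numbers: a price quoted by a chatbot versus the exchange's own figure.
close_enough = figure_matches(100.5, 100.0)
needs_review = not figure_matches(110.0, 100.0)

print(close_enough)  # True
print(needs_review)  # True
```

A mismatch does not say which source is wrong. It only signals that the figure should be checked again before it informs a trade.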

For sensitive topics such as health, safety, law, and investment, avoid following AI answers directly without verification. A cautious approach is far healthier than making quick decisions based on one chatbot response.

Conclusion

OpenAI has added safety features to ChatGPT that read signs of self-harm, overdose risk, and potential violence through broader conversation context. Temporary safety summaries and Trusted Contact point toward a more proactive approach to AI safety.

However, these features should not be understood as a guarantee of full prevention. Overdose, self-harm, and violence still require human support, professional help, emergency services, and policy oversight.

Before using an AI platform for sensitive matters, check its safety features, privacy settings, service limits, and official sources directly.

FAQ

What Is the Latest OpenAI ChatGPT Safety Update?

The latest update focuses on the ability to read self-harm risks and potential violence from conversation context, including through temporary safety summaries.

What Is a Temporary Safety Summary in ChatGPT?

A temporary safety summary is a short summary related to safety context in sensitive conversations, not permanent memory for general personalization.

Can ChatGPT Prevent Overdose?

ChatGPT can help refuse harmful instructions and encourage users to seek help, but it cannot guarantee overdose prevention or replace medical professionals.

What Is the Connection Between the California Lawsuit and OpenAI ChatGPT?

The California lawsuit alleges that ChatGPT played a role in dangerous conversations related to an accidental overdose. The claim still needs to go through the legal process.

Is Trusted Contact Safe for User Privacy?

Trusted Contact is designed as an optional feature that sends notifications to trusted contacts without sharing full transcripts, but users still need to read the privacy settings directly.

Disclaimer: The views expressed belong exclusively to the author and do not reflect the views of this platform. This platform and its affiliates disclaim any responsibility for the accuracy or suitability of the information provided. It is for informational purposes only and not intended as financial or investment advice.